- the essence of your voice app,

- why context and contingencies are highly important, and

- the value of focusing on the basics first before you move on to differentiate yourself and delight your users.

Are you involved in the conversation design process? Then this article is for you! How do you design the actual conversation, the one the user has with your brand, so that you deliver the best user experience possible?

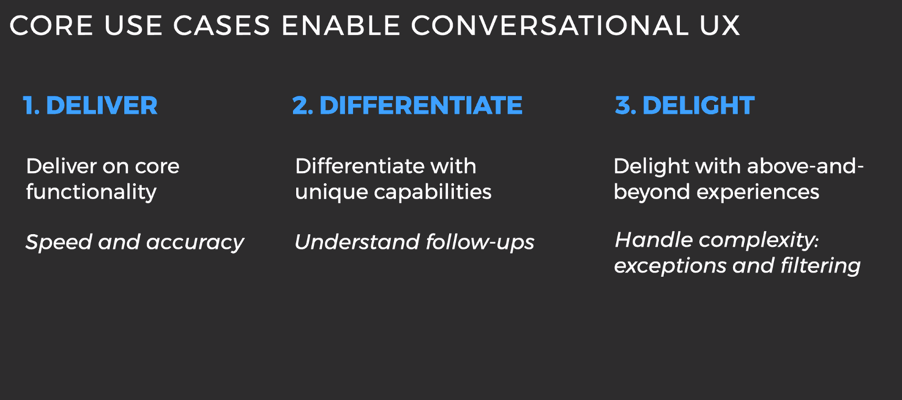

To give a little recap, conversation design consists of three parts:

- Deliver: get the basics right

- Differentiate: more natural ways of interacting

- Delight: surprise the user.

(Source: SoundHound presentation at the Voice of the Car Summit, 8 April 2020)

In this article, we walk you through the first stage in more detail. This stage forms the foundation of your conversation.

1. Deliver: get the basics right

The first step may seem easy: choose your invocation name, the word the user has to say to access your voice app. I didn't think this would be too difficult, but boy oh boy, was I wrong. We chose JUKE as the invocation name. Pretty logical, since the brand itself was called JUKE, the free radio, podcast, and music platform of the Netherlands. The first issue we encountered was that we had chosen an English name while the Google Assistant spoke Dutch. Reportedly, it took the Google Assistant about 18 months to understand the name Beyoncé, a singer and 24-time Grammy winner. So dancing to one of her hits, such as Single Ladies, via voice was an issue in the beginning. As you can imagine, we could not wait 18 months. Luckily, Google helped us get the name JUKE recognized and linked to the right Google Action. But it showed that we have to be careful with the names we use, whether it is our own brand or not. Make sure your brand name can be pronounced and understood correctly in your language.

Furthermore, consider the context in which the user says your brand name out loud. Is it in a public space? Does your voice app deal with banking details or other personal information? Then your user might only use it at home in their living room, so your voice app will mainly be used in private environments.

Before we start shaping the conversation, I recommend taking another look at your persona and the context you are designing for. Make sure you use the right words and that your brand comes across the way you intend.

Yes, we understand you: the happy path

Let’s start with the exciting part: the conversation! When everything goes well and users get exactly what they want, they are on the so-called happy path. For example, if a user asks “Hey Google, ask JUKE to play Radio 538”, the assistant responds with “Got it, now playing Radio 538”.

You have to know the ideal path the user goes through to complete the conversation. If your voice app gives information about mortgages, that path will probably need more steps. The bigger your voice app grows, the more happy paths you will have. Keep improving your happy paths with input from your users. If they use different utterances (words or sentences) than you expected, add them to the …. For instance, we noticed that users asked for local radio stations, so we made sure that this works the next time they ask.

Oh no, something went wrong: the error prompts

Not every conversation will go the way you planned. Sometimes the assistant simply doesn’t understand your user. For me, this is the exciting part!

How do you make sure your user ends up back on the right path? Several kinds of error prompts can help: messages you give the user to guide them back. Thrilling, isn’t it?

For every kind of error, the user gets up to three prompts. For a podcast and radio voice app, these could be: first, ‘Did you say you wanted to hear a podcast or a radio station?’; second, ‘One more time, which podcast or radio station did you mean?’; and third, ‘You can ask me for a radio station by saying “Hey Google, play Radio 538” or by saying the name of a podcast. What can I help you with?’. Each following prompt gives the user more guidance, which helps lower their stress level. You do not want your user screaming at their voice device and having a terrible brand experience. We want the user to come back and chit-chat with us for as long as they like. If the user still hasn’t given a response the system understands after three attempts, we close the conversation, for example with ‘I’m sorry, I’m not able to help you. I will send a link to your phone so you can look in the mobile app. Talk to you soon!’. In real life you also end a conversation when you don’t know the answer, so why not in voice design?
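The escalation described above can be sketched as a small counter per conversation. This is a minimal sketch, not JUKE's implementation: the names `ErrorState`, `next_error_response` and `record_success` are hypothetical, the prompt texts are adapted from the examples in the text, and resetting the counter after a successful turn is an assumption.

```python
from dataclasses import dataclass

# Hypothetical prompt texts, adapted from the JUKE examples in the text.
REPROMPTS = [
    "Did you say you wanted to hear a podcast or a radio station?",
    "One more time, which podcast or radio station did you mean?",
    'You can ask me for a radio station by saying "Hey Google, play Radio 538" '
    "or by saying the name of a podcast. What can I help you with?",
]
EXIT_PROMPT = (
    "I'm sorry, I'm not able to help you. I will send a link to your phone "
    "so you can look in the mobile app. Talk to you soon!"
)


@dataclass
class ErrorState:
    """Counts consecutive failed turns in one conversation (hypothetical helper)."""

    failures: int = 0

    def record_success(self) -> None:
        # Assumption: an understood turn puts the user back on the happy path,
        # so the next error starts again at the first, shortest prompt.
        self.failures = 0


def next_error_response(state: ErrorState) -> tuple[str, bool]:
    """Return (prompt, keep_mic_open) for the next failed turn."""
    if state.failures < len(REPROMPTS):
        prompt = REPROMPTS[state.failures]
        state.failures += 1
        return prompt, True  # reprompt with more detail and keep listening
    return EXIT_PROMPT, False  # out of attempts: close the conversation


if __name__ == "__main__":
    state = ErrorState()
    for _ in range(4):
        prompt, keep_open = next_error_response(state)
        print(keep_open, prompt)
```

Four failed turns in a row yield the three reprompts with the microphone left open, followed by the closing message with the microphone closed.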

Kinds of error prompts

The first error prompt that might occur is the ‘No Input’ prompt. This happens when we do not hear anything. Imagine you are talking to your Google Home and then your doorbell rings. It’s the mailman with your package, and you have to rush to the door because he won’t wait for long. By not responding, you, as a user, cut off the conversation, and the voice assistant is left with no input.

The first message we send back is a rapid reprompt, a quick message. Depending on where the user left off, it could be ‘Can you speak a bit louder?’. It is meant to get the user to quickly repeat what they said before. Leave the microphone open for a bit and wait for a response. If the user still doesn’t respond, give them a reprompt with a bit more information, an escalating detail, such as ‘Please tell me the name of the artist, the song or a bit of the lyrics and I will put it on for you’. The final message reminds the user what they can do with your voice app. Think of ‘You can ask me to play songs, radio stations or podcasts by telling me the title, the artist name or a piece of the lyrics. What can I help you with?’. If the user still doesn’t respond after this, end the conversation.

Besides no input, there can be a no match. This is when the user has said something, but we are not quite sure what they mean. Did they use an utterance we are not familiar with yet, or did they say something completely off topic, perhaps because they were talking to someone else in the room? Just like with no input, it is useful to start with a rapid reprompt, for example ‘Was that a yes or a no?’, followed by an escalating detail, and to end with a message that explains what your voice app can do. At JUKE we had three no match prompt types: one for general no matches, one for radio and one for podcasts. Since a lot of radio stations have radio in their name and podcasts have podcast in their name, we used this to our advantage. We had no clue what the user was saying, but we knew they wanted a podcast, so they only got podcast-related suggestions to help them. Think about the context: is this also the case in your voice app? Is there a word like podcast or radio that is used in many of the events you want to trigger, and would the user benefit from being put into a ‘no match radio/podcast’ box?
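One way to picture the keyword routing JUKE used for no matches is a lookup from keywords to category-specific reprompt sets. This is a minimal sketch under stated assumptions: the function names and prompt texts are hypothetical, matching on whole lowercase words is a deliberately naive stand-in for whatever the NLU provides, and letting "podcast" win when both keywords appear is an arbitrary choice.

```python
import re

# Hypothetical reprompt sets, one per no-match category, each escalating in detail.
NO_MATCH_PROMPTS = {
    "general": [
        "Sorry, what would you like to hear?",
        "Please tell me the name of a song, radio station or podcast.",
        "You can ask me to play songs, radio stations or podcasts. What can I help you with?",
    ],
    "radio": [
        "Which radio station did you mean?",
        "Please tell me the name of the radio station you want to hear.",
        'You can ask for a station by saying, for example, "play Radio 538". '
        "Which station would you like?",
    ],
    "podcast": [
        "Which podcast did you mean?",
        "Please tell me the name of the podcast you want to hear.",
        "You can ask for a podcast by saying its name. Which podcast would you like?",
    ],
}


def no_match_category(utterance: str) -> str:
    """Route an utterance we could not match to a reprompt category by keyword."""
    words = set(re.findall(r"[a-z0-9]+", utterance.lower()))
    # Naive whole-word check; "podcast" wins if both keywords appear.
    if words & {"podcast", "podcasts"}:
        return "podcast"
    if "radio" in words:
        return "radio"
    return "general"


def no_match_prompt(utterance: str, attempt: int) -> str:
    """Return the reprompt for attempt 0, 1, 2, ..., capped at the last one."""
    prompts = NO_MATCH_PROMPTS[no_match_category(utterance)]
    return prompts[min(attempt, len(prompts) - 1)]
```

Deciding when to stop reprompting and end the conversation is left to the caller here, so the routing stays separate from the escalation logic.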

The third error prompt is disambiguation. This applies when we did understand the user’s response, but we need more context. For example, we ask ‘Which song do you want to hear?’ and they respond with ‘a song’. If we do not know the user and their preferences yet, we need to find out more. Which genre or artist do they like? This information helps us determine which song they are looking for.
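A disambiguation step can be sketched as a check on which details of a request are missing. This is a minimal sketch, assuming the NLU fills hypothetical `title`, `artist` and `genre` slots and that `known_genre` stands in for stored user preferences; none of these names come from JUKE or a specific platform.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class SongRequest:
    # Hypothetical slots filled by the NLU; None means the user did not say it.
    title: Optional[str] = None
    artist: Optional[str] = None
    genre: Optional[str] = None


def disambiguation_question(
    request: SongRequest, known_genre: Optional[str] = None
) -> Optional[str]:
    """Return a follow-up question if the request is too vague, else None.

    known_genre is an assumed stand-in for preferences we already know.
    """
    if request.title or request.artist or request.genre:
        return None  # enough to pick a song
    if known_genre:
        return None  # we know the user, so we can pick from their favourite genre
    # "a song" with no known preferences: ask for more context.
    return "Sure! Which genre or artist do you like?"
```

Returning `None` means the app can act right away; any other value is the question to ask before choosing a song.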

Confirmations: to be understood or not to be understood

Your best friend mentions the best new restaurant in town and you are not quite sure you caught the name. So you repeat the name and your friend confirms it. This is an explicit confirmation: we respond to the user to check whether we understood them correctly. Whether we need one depends on the confidence level, that is, how sure the system is that it understood the user. If you have a banking voice app, the confidence level must be quite high before you give an answer. In a music voice app it can be a bit lower, since no sensitive information is involved.

So ‘Okay great, you want to transfer 100 euro to Mr Janse, right?’ would be an example of an explicit confirmation. We first state what we understood and then ask a question to make sure we send the 100 euro to the right bank account. An implicit confirmation is used when we are quite certain we understood correctly. ‘Got it, now playing Radio 538’ would be an example. We first say that we understood and then that we are carrying out the action the user asked for. Again, how high you set the threshold depends on your business. In general, when a request involves sensitive information or money, we need to be extremely careful and therefore set a high threshold.
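The threshold idea can be sketched as a per-domain cut-off on the recognizer's confidence score. This is a minimal sketch under assumptions: the confidence is taken to be a number between 0 and 1, and the threshold values and function name are hypothetical, chosen only to illustrate a high bar for banking and a lower one for music.

```python
# Hypothetical per-domain thresholds (assumptions, not values used by JUKE).
# The higher the threshold, the more certain we must be before confirming implicitly.
IMPLICIT_THRESHOLDS = {
    "banking": 0.95,  # money matters: be extremely careful
    "music": 0.60,    # a wrong song is easy to correct
}


def confirmation_type(confidence: float, domain: str) -> str:
    """Return 'implicit' if confidence clears the domain's threshold, else 'explicit'."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if confidence >= IMPLICIT_THRESHOLDS[domain]:
        return "implicit"  # e.g. "Got it, now playing Radio 538"
    return "explicit"  # e.g. "You want to transfer 100 euro to Mr Janse, right?"
```

With these example values, a confidence of 0.8 is enough for an implicit confirmation in the music domain but still triggers an explicit confirmation in the banking domain.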

Up next: Differentiate & Delight.

We now know:

- The basics of voice design (define your goal, your target audience and your scope)

- The importance of crafting a proper persona that fits your brand

- The essence of your voice app, why context and contingencies are important, and why you should get the basics right first before you differentiate yourself and delight the user

- Finally, the basics of your conversational flow: what to do when everything goes right, when it goes wrong, or when we need a bit more information.

Next time, we will go into depth on how we can differentiate the conversation and delight our users.

Stay tuned!
